llms.txt vs robots.txt vs Sitemap: What Businesses Should Allow

A practical guide for businesses deciding what to allow in llms.txt, robots.txt, and sitemap.xml for SEO, AI search visibility, privacy, crawler control, and technical SEO.

The short version is simple: robots.txt controls which compliant crawlers may request parts of your site, sitemap.xml tells search engines which public URLs matter, and llms.txt gives AI systems a curated plain-text map of your most useful content. A business should usually use all three, but it should not use them for the same job.

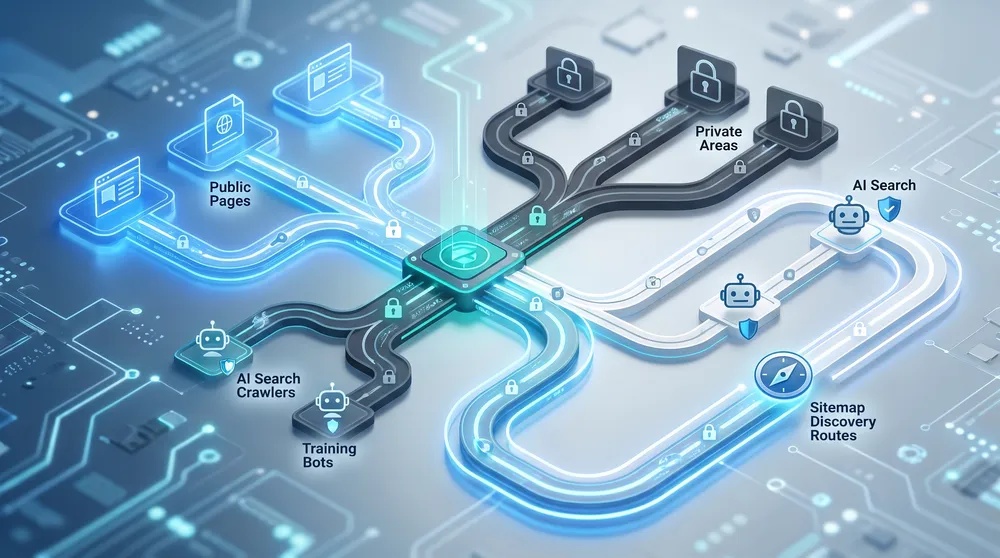

This matters more in 2026 because AI search has split crawler decisions into separate business choices. OpenAI separates OAI-SearchBot for ChatGPT search from GPTBot for model training. Google has Googlebot for Search and Google-Extended as a control token for Gemini-related training and grounding uses, while stating that Google-Extended does not affect Google Search inclusion or ranking. Anthropic documents different Claude bots for model development, search, and user-directed fetching. Perplexity says its crawler respects robots.txt for indexing. Microsoft Bingbot powers Bing discovery and is part of the search ecosystem behind Microsoft AI experiences.

The mistake businesses make is looking for one universal switch. None exists. The better question is: what do we want discovered, what do we want cited, what do we want used for model training, and what should never be publicly crawlable at all?

Best Default Policy for Most Businesses

For a normal public business website, allow search crawlers, publish a clean sitemap, add a focused llms.txt file, and selectively block training crawlers only if your legal or content strategy requires it.

    User-agent: *
    Allow: /

    Sitemap: https://www.example.com/sitemap.xml

    User-agent: OAI-SearchBot
    Allow: /

    User-agent: GPTBot
    Disallow: /

    User-agent: Google-Extended
    Disallow: /

This example keeps the public site crawlable for normal search and ChatGPT search discovery while signaling an opt-out from some model-training and AI-product reuse. It is not the right answer for every business. A publisher that licenses content may block more. A software company that wants maximum AI-answer visibility may allow more. A private portal should not rely on robots.txt at all; it should use authentication and access control.
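One way to sanity-check a policy like this before deploying it is Python's standard-library `urllib.robotparser`. The sketch below is a minimal check, assuming the example rules are grouped one user agent per block; the test page URL is hypothetical, and real crawlers may resolve edge cases differently from this parser. Google-Extended is a control token rather than a crawler that visits your site, so the check only shows how the rule would be read.

```python
from urllib.robotparser import RobotFileParser

# The example policy from this section, grouped one user agent per block.
ROBOTS_TXT = """\
User-agent: *
Allow: /

Sitemap: https://www.example.com/sitemap.xml

User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text locally, without any network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
page = "https://www.example.com/services/"  # hypothetical public page

for agent in ("Googlebot", "Bingbot", "OAI-SearchBot", "GPTBot", "Google-Extended"):
    verdict = "allowed" if parser.can_fetch(agent, page) else "blocked"
    print(f"{agent}: {verdict}")
```

Running a check like this in a deployment pipeline is a cheap guard against a template change silently blocking a search crawler.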

The Three Files Do Different Jobs

Use the right file for the right decision. Confusing them creates SEO, privacy, and AI visibility problems.

robots.txt

Controls crawling for compliant bots. It can allow or disallow paths for specific user agents such as Googlebot, Bingbot, OAI-SearchBot, GPTBot, ClaudeBot, and PerplexityBot.

sitemap.xml

Helps search engines discover important public URLs. It should include canonical, indexable pages you want found, not blocked, noindex, duplicate, private, or low-value URLs.

llms.txt

Provides a curated Markdown summary and link map for AI systems. It is guidance for understanding and citation, not an access-control or privacy mechanism.

noindex

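Use meta robots or X-Robots-Tag noindex when a crawlable page should not appear in search results. Do not confuse noindex with robots.txt disallow: a crawler has to fetch the page to see noindex, so a page that is also disallowed in robots.txt never delivers that signal.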

Authentication

Use login, permissions, paywall controls, or server-side access rules for content that must stay private. A robots.txt file is a request to good crawlers, not a security barrier.

Edge Rules

Use CDN, WAF, bot management, or rate limits when crawler load, scraping, or non-compliant bots create real operational risk.
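The noindex and robots.txt entries above interact, and the combination is easy to get wrong. The sketch below is a simplified illustration, not a full implementation: `has_noindex` looks for a noindex directive in an `X-Robots-Tag` header or a `<meta name="robots">` tag, and `describe` spells out what each combination of crawl rule and noindex signal means. Both function names are hypothetical, and the header check deliberately ignores agent-specific prefixes such as `googlebot: noindex`.

```python
from html.parser import HTMLParser


class _MetaRobotsParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(headers: dict, html: str) -> bool:
    """Return True if the header or the HTML carries a noindex directive."""
    header_value = next(
        (v for k, v in headers.items() if k.lower() == "x-robots-tag"), ""
    )
    header_directives = [d.strip().lower() for d in header_value.split(",")]
    meta = _MetaRobotsParser()
    meta.feed(html)
    return "noindex" in header_directives or "noindex" in meta.directives


def describe(crawl_allowed: bool, noindex: bool) -> str:
    """Explain how a robots.txt rule and a noindex signal combine."""
    if not crawl_allowed and noindex:
        return "conflict: the crawler is blocked, so it cannot read the noindex"
    if not crawl_allowed:
        return "not crawled, but the URL may still be indexed from external links"
    if noindex:
        return "crawled and kept out of search results"
    return "crawled and eligible for indexing"


print(describe(crawl_allowed=False, noindex=True))
```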

Options Snapshot

Use these options to decide whether your site needs manual file management, search engine diagnostics, CMS SEO tooling, llms.txt generation, crawl auditing, edge bot controls, or AI visibility monitoring.

| Option | Best for | What it helps with | Watch-outs |
|---|---|---|---|
| Manual implementation | Static sites, custom CMS builds, and teams with technical SEO support. | Create /robots.txt, /sitemap.xml, /llms.txt, and validate them in Google Search Console and Bing Webmaster Tools. | Simple, but easy to get wrong during migrations, staging launches, URL rewrites, and CMS changes. |
| Google Search Console and Bing Webmaster Tools | Every public business website. | Submit sitemaps, monitor indexing, inspect crawl issues, and test how search engines see important URLs. | They do not create your llms.txt strategy or enforce AI bot policy. They are diagnostic and submission tools. |
| CMS SEO tools, such as Yoast SEO Premium | WordPress sites that want managed XML sitemaps, schema, redirects, internal linking help, and technical SEO guardrails. | Generate and maintain sitemaps, reduce technical SEO mistakes, and keep public content easier for search crawlers to understand. | Most CMS SEO plugins do not replace a deliberate AI crawler policy or a carefully written llms.txt file. |
| llms.txt generators and validators | Teams that want a fast llms.txt draft, validation, sitemap-based regeneration, or simple AI citation checks. | Generate a structured AI-readable file, validate formatting, monitor sitemap changes, and keep the file aligned with public pages. | The llms.txt ecosystem is still emerging. Do not assume every AI system fetches or obeys it consistently. |
| Screaming Frog SEO Spider | SEO teams that need to audit crawlability, robots directives, blocked URLs, sitemap quality, broken links, redirects, and site architecture. | Find URLs blocked by robots.txt, generate XML sitemaps, compare crawls, review meta robots directives, and integrate Search Console data. | Desktop tool. It audits and generates; it does not enforce bot access at the server edge. |
| Cloudflare bot controls and WAF | Sites that need stronger controls for bot traffic, scraping, rate limiting, AI crawler load, and edge enforcement beyond robots.txt. | Bot analytics, WAF rules, rate limiting, verified bot handling, AI bot controls on supported plans, and enterprise bot management for high-risk sites. | Blocking at the edge can also block discovery. Test carefully before blocking search or AI-search crawlers. |
| Semrush SEO and AI Visibility | Marketing teams that want AI visibility, prompt tracking, technical AI-search audit checks, SEO audits, and competitor context. | Find AI search blockers, track mentions and citations, monitor prompts, audit site health, and connect classic SEO with AI search visibility. | It shows visibility and audit signals. It does not replace implementation work in robots.txt, sitemap generation, CMS templates, or edge rules. |
| Ahrefs | SEO teams that need technical auditing, AI visibility signals, prompt tracking, and competitor research in one workflow. | Audit technical SEO, inspect site health, monitor AI visibility signals, track prompts, and research which brands and pages appear across AI and search surfaces. | Plan limits, add-ons, crawl credits, prompts, and extra users affect the best fit. |

Allow Discovery, Limit Reuse, Protect Private Areas

Do not start with a list of bots. Start with a business decision about which content should be discoverable, reusable, cited, hidden, or authenticated.

What Businesses Should Usually Allow

For most public business websites, the safest commercial default is to keep public discovery open while limiting sensitive or low-value content.

Allow Googlebot

Keep Googlebot allowed for public pages you want in Google Search, Google Images, Google News, Discover, and related Search features.

Allow Bingbot

Allow Bingbot for Bing Search visibility and Microsoft search experiences. Blocking Bingbot can reduce discovery across Microsoft surfaces.

Allow OAI-SearchBot

Allow OAI-SearchBot when you want eligible public pages surfaced and cited in ChatGPT search features.

Publish Sitemaps

List canonical, indexable, public URLs in XML sitemaps and reference the sitemap location from robots.txt.

Publish llms.txt

Use llms.txt to summarize your company, services, products, documentation, policies, and important evergreen pages for AI readers.

Protect Private URLs

Use authentication, authorization, noindex, and edge controls for content that should not appear publicly or be crawled.

What to Put in robots.txt

Put crawl access rules in robots.txt. It belongs at the root of the host, such as https://www.example.com/robots.txt. Google states that robots.txt rules apply only to the host, protocol, and port where the file is hosted, so a rule for www.example.com does not automatically cover shop.example.com or a separate staging domain.
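The host, protocol, and port scoping can be made concrete with a few lines of Python. This is a minimal sketch; `robots_txt_url` is a hypothetical helper, and the subdomain and staging URLs are made up for illustration.

```python
from urllib.parse import urlsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url.

    The scheme, host, and port are copied as-is, so every distinct
    combination maps to its own robots.txt file.
    """
    parts = urlsplit(page_url)
    return f"{parts.scheme}://{parts.netloc}/robots.txt"


for url in (
    "https://www.example.com/pricing?plan=pro",
    "https://shop.example.com/cart/",
    "http://staging.example.com:8080/preview/home",
):
    print(url, "->", robots_txt_url(url))
```

Three different hosts produce three different robots.txt locations, which is why each subdomain and staging environment needs its own file.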

Use robots.txt to block low-value crawler paths such as internal search results, faceted parameter traps, cart and checkout paths, admin areas, duplicate generated pages, and staging paths. Use it to state crawler preferences for AI-related user agents. Use it to reference your sitemap.
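As a rough illustration of blocking low-value paths, the sketch below renders a robots.txt file from a list of path prefixes and checks a few URLs against it with Python's standard-library parser. The prefixes, URLs, and the `render_robots_txt` helper are all hypothetical. The standard-library parser matches plain prefixes only, so wildcard patterns that some search engines support are left out here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical low-value paths; replace with the paths your site really uses.
BLOCKED_PREFIXES = ["/search", "/cart/", "/checkout/", "/admin/", "/staging/"]


def render_robots_txt(blocked_prefixes, sitemap_url):
    """Build robots.txt text that blocks the given prefixes for all crawlers."""
    lines = ["User-agent: *"]
    lines += [f"Disallow: {prefix}" for prefix in blocked_prefixes]
    lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


robots_txt = render_robots_txt(BLOCKED_PREFIXES, "https://www.example.com/sitemap.xml")

checker = RobotFileParser()
checker.parse(robots_txt.splitlines())

for url in (
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/search?q=shoes",
    "https://www.example.com/checkout/step-1",
):
    status = "crawlable" if checker.can_fetch("Googlebot", url) else "blocked"
    print(f"{status}: {url}")
```

Prefix rules are broad: `/search` without a trailing slash also matches a path such as `/search-tips`, so it is worth testing real URLs before shipping a rule.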

Do not use robots.txt as a privacy control. The file itself is publicly readable, so listing a sensitive path advertises it, and a disallowed URL can still be known from external links, server logs, leaked URLs, or browser history. If a page is sensitive, it should require authentication or be unavailable publicly.

Common AI Crawler Choices

- OAI-SearchBot: allow if ChatGPT search visibility matters.

- GPTBot: allow if you are comfortable with content potentially being used for OpenAI model improvement; block if training reuse is not acceptable.

- Googlebot: allow for Google Search visibility.

- Google-Extended: decide separately from Search. Google says it does not affect Google Search inclusion or ranking.

- Bingbot: allow for Bing and Microsoft discovery.

- ClaudeBot and Claude-SearchBot: decide separately if you want to distinguish model development from Claude search visibility.

- PerplexityBot: allow if Perplexity visibility is desirable; block if your content policy requires it.
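One way to keep these choices explicit is to record them as data and generate the robots.txt groups from that record. The sketch below is an illustration only: the allow and block values are a hypothetical policy for one business, not a recommendation, and the user-agent tokens are the ones named in this article, which should be confirmed against each vendor's current documentation.

```python
# Hypothetical policy: True = allow the whole site, False = block it.
CRAWLER_POLICY = {
    "OAI-SearchBot": True,      # ChatGPT search visibility
    "GPTBot": False,            # OpenAI model training
    "Googlebot": True,          # Google Search
    "Google-Extended": False,   # Gemini-related training and grounding
    "Bingbot": True,            # Bing and Microsoft discovery
    "ClaudeBot": False,         # Anthropic model development
    "Claude-SearchBot": True,   # Claude search visibility
    "PerplexityBot": True,      # Perplexity visibility
}


def render_policy(policy: dict[str, bool]) -> str:
    """Render one robots.txt group per user agent."""
    groups = []
    for agent, allowed in policy.items():
        rule = "Allow: /" if allowed else "Disallow: /"
        groups.append(f"User-agent: {agent}\n{rule}")
    return "\n\n".join(groups) + "\n"


policy_text = render_policy(CRAWLER_POLICY)
print(policy_text)
```

Keeping the policy in one reviewed structure makes it easier for marketing, legal, and engineering to see what was decided and why.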

What to Put in sitemap.xml

Put your important public URLs in your XML sitemap. Google describes a sitemap as a file that provides information about pages, videos, images, and relationships between files so search engines can crawl the site more efficiently. Sitemaps are especially useful for large sites, new sites, sites with poor internal linking, and sites with rich media or news content.

Include only URLs you want crawled and considered for indexing. A clean sitemap should usually exclude blocked URLs, noindex URLs, duplicate parameter URLs, internal search result URLs, expired landing pages, checkout flows, account pages, and staging URLs.

Also keep lastmod honest. Google says lastmod should reflect a significant update to page content, structured data, or links, not a cosmetic timestamp change.
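The inclusion and lastmod rules above can be sketched as a small generator. This is a minimal example under stated assumptions: the `Page` record, its `indexable` flag, and the sample URLs and dates are hypothetical, and a real site would fill them from its CMS or crawl data.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    url: str
    last_significant_update: date  # content change, not a cosmetic timestamp
    indexable: bool = True         # False for noindex, blocked, or duplicate URLs


def build_sitemap(pages: list[Page]) -> str:
    """Return sitemap XML that lists only the indexable pages."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not page.indexable:
            continue  # keep blocked, noindex, and duplicate URLs out
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page.url
        ET.SubElement(url, "lastmod").text = page.last_significant_update.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


pages = [
    Page("https://www.example.com/", date(2026, 1, 15)),
    Page("https://www.example.com/services/", date(2025, 11, 3)),
    Page("https://www.example.com/cart/", date(2026, 1, 10), indexable=False),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```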

What to Put in llms.txt

Put a curated, human-readable Markdown overview in /llms.txt. The llms.txt proposal describes a concise file that helps language models find useful content without parsing a full website. It commonly includes a title, a short blockquote summary, notes, and sectioned links to important pages.

Use llms.txt for your strongest evergreen pages: services, product documentation, pricing explanations, support docs, case studies, industry guides, locations, policies, and contact or sales pathways. Write descriptions that explain why each page matters. Keep the file short enough to be useful.

Do not treat llms.txt as a magic ranking factor or a legal opt-out file. It is still an emerging convention, not an IETF or W3C standard, and major AI providers vary in what they publicly disclose about fetching it. Its value is that it is cheap, clear, and under your control.
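The structure described above (a title, a blockquote summary, and sectioned links with descriptions) is simple enough to generate from a short list of pages. The sketch below is one possible rendering, assuming the commonly described layout of an H1 title, a blockquote, and H2 sections of Markdown links; the company name, URLs, descriptions, and the `render_llms_txt` helper are all hypothetical.

```python
def render_llms_txt(title: str, summary: str, sections: dict) -> str:
    """Render llms.txt Markdown.

    sections maps a section heading to a list of
    (link text, URL, description) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, description in links:
            lines.append(f"- [{name}]({url}): {description}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


llms_txt = render_llms_txt(
    title="Example Co",
    summary="Example Co provides plumbing services for homes and small businesses.",
    sections={
        "Services": [
            ("Emergency repairs", "https://www.example.com/services/emergency/",
             "What is covered, response times, and how to book."),
        ],
        "Policies": [
            ("Pricing", "https://www.example.com/pricing/",
             "How call-out fees and hourly rates are calculated."),
        ],
    },
)
print(llms_txt)
```

Generating the file from the same page inventory that feeds the sitemap is one way to keep the two aligned after site changes.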

Recommended Setup by Business Type

Local Service Business

Allow Googlebot, Bingbot, OAI-SearchBot, and other search-oriented crawlers. Publish a sitemap with service pages, suburb pages, blog guides, contact pages, and schema-rich landing pages. Add llms.txt with a concise explanation of services, locations, proof points, and best guides.

Ecommerce Store

Allow product and category crawling, but block cart, checkout, account, internal search, filtered duplicates, and low-value parameters. Put canonical products and categories in the sitemap. Use llms.txt to summarize product categories, buying guides, shipping policies, warranty terms, and support pages.

SaaS or B2B Website

Allow public docs, product pages, integrations, pricing, comparisons, case studies, and support docs. Block app dashboards, customer portals, preview environments, and private docs. Use llms.txt to map docs, API pages, use cases, and decision-stage content.

Publisher or Paid Content Site

Separate discoverability from reuse. You may allow search crawlers and selected AI-search crawlers while blocking training crawlers. Put public article URLs in sitemaps, but do not expose subscriber-only content without a clear paywall and licensing strategy.

Make Crawler Policy a Business Decision

Marketing, legal, product, engineering, and leadership should agree on the policy before a developer copies a robots.txt template from the web.

Recommended Setup by Maturity

Foundation

Create the three files manually, submit sitemaps in Google Search Console and Bing Webmaster Tools, use validators, and audit a small site with crawler tools. This is enough for many small business websites.

Managed File Updates

Add a simple llms.txt generator or monitoring tool if your team wants faster generation, validation, and change monitoring. This is useful when the site changes often but does not yet justify a full SEO platform.

Active Monitoring

Add a focused AI visibility platform, SEO audit workflow, or CDN control layer when you need better technical checks, AI-search monitoring, crawl diagnostics, or edge controls.

Governed Operations

Use advanced SEO platforms, CDN governance, and deeper crawler controls if you manage multiple domains, international content, ecommerce catalogues, or publisher risk.

Common Mistakes

- Blocking all AI crawlers without realizing it may reduce AI-search citations and referrals.

- Allowing all crawlers without discussing training reuse, licensing, and content rights.

- Putting private, noindex, or blocked URLs in the XML sitemap.

- Using robots.txt to hide sensitive content instead of authentication.

- Creating llms.txt once and never updating it after site changes.

- Blocking CSS or JavaScript resources that Googlebot and other search engines need for rendering.

- Forgetting that subdomains need their own robots.txt and sitemap strategy.

- Copying AI crawler templates without checking the current official user-agent documentation.
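Several of these mistakes can be caught with a small automated audit. The sketch below covers one of them, sitemap URLs that robots.txt blocks, using only the Python standard library. The `blocked_sitemap_urls` helper and the sample files are hypothetical, and the check reflects how Python's parser reads the rules, which may differ from a given crawler in edge cases.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def blocked_sitemap_urls(robots_txt: str, sitemap_xml: str, agent: str = "Googlebot"):
    """Return sitemap URLs that robots.txt disallows for the given agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    root = ET.fromstring(sitemap_xml)
    locs = [el.text.strip() for el in root.findall("sm:url/sm:loc", NS) if el.text]
    return [url for url in locs if not parser.can_fetch(agent, url)]


# Hypothetical sample files for illustration.
sample_robots = "User-agent: *\nDisallow: /checkout/\n"
sample_sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://www.example.com/</loc></url>"
    "<url><loc>https://www.example.com/checkout/</loc></url>"
    "</urlset>"
)

conflicts = blocked_sitemap_urls(sample_robots, sample_sitemap)
print(conflicts)
```

Any URL this returns is being advertised in the sitemap and blocked in robots.txt at the same time, so one of the two files needs to change.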

Final Recommendation

Most businesses should allow mainstream search crawlers, publish a clean sitemap, and add a concise llms.txt file for public, high-value content. Then make a separate policy call on model-training crawlers. If you want maximum AI discovery, allow more AI-search crawlers. If your content has licensing or IP sensitivity, block training crawlers while keeping search visibility open where vendors support that separation.

The winning setup is not "allow everything" or "block everything." It is a documented policy that says which public content should be discovered, which content should be cited, which content can be reused, and which content should never be public in the first place.

Sources Checked

- llms.txt specification reference

- llmtxt.info - llms.txt status, limitations, and examples

- Google Search Central - robots.txt reference

- Google Search Central - sitemaps overview

- Google Search Central - build and submit a sitemap

- Google common crawlers and Google-Extended

- OpenAI crawler documentation

- Anthropic ClaudeBot documentation

- Perplexity robots.txt documentation

- Microsoft Support - how Bing delivers search results

- Bing Webmaster Blog - robots.txt tester

- Screaming Frog SEO Spider product information

- Yoast SEO Premium product information

- Semrush AI Visibility Toolkit documentation

- Semrush SEO Toolkit account documentation

- Ahrefs product information

- Cloudflare plan information

- Cloudflare Enterprise Bot Management