Should You Block AI Crawlers?

A practical business guide to blocking, allowing, and monitoring AI crawler controls across robots.txt, Google-Extended, GPTBot, OAI-SearchBot, ClaudeBot, PerplexityBot, Cloudflare, Semrush, Ahrefs, Fastly, Akamai, and DataDome.

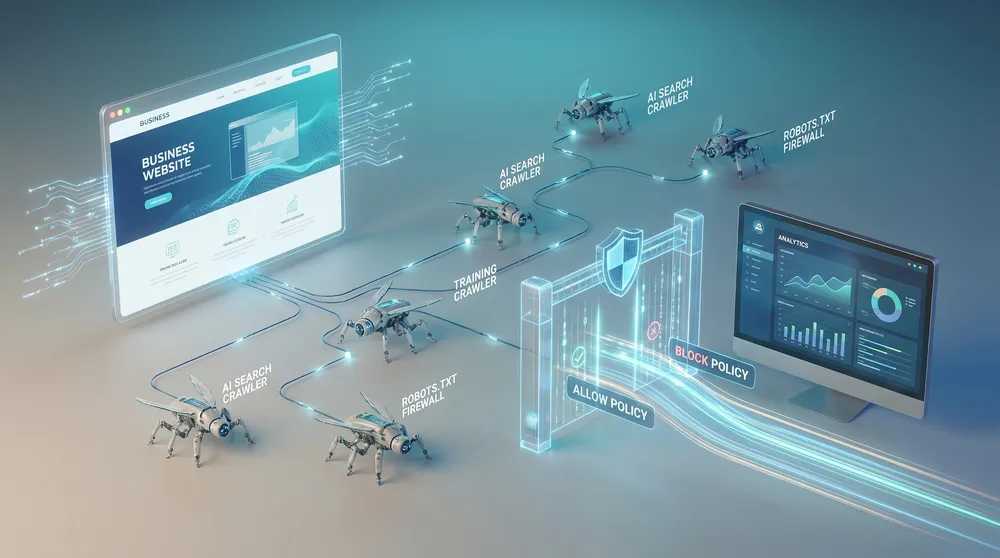

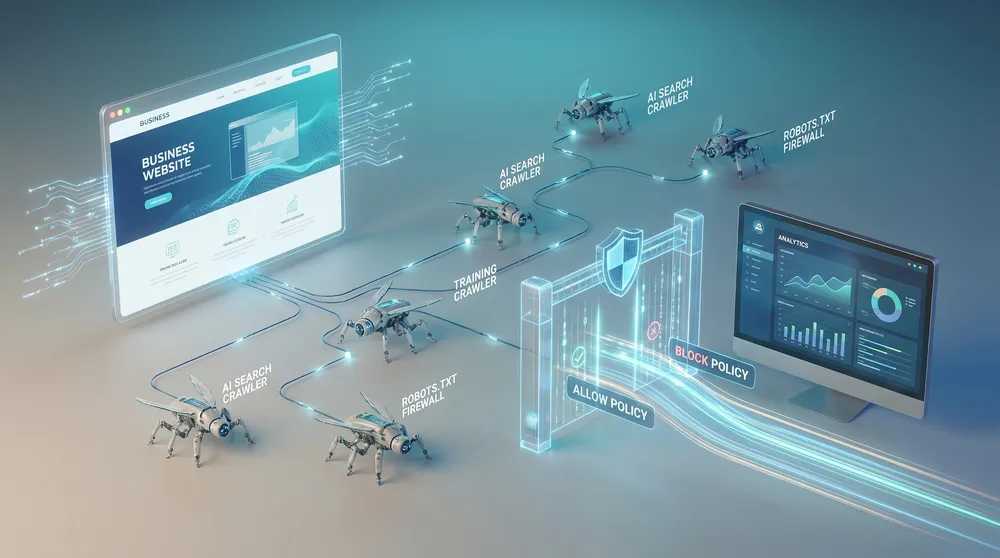

Blocking AI crawlers sounds simple until you look at what different AI bots actually do. Some crawl to train foundation models. Some crawl to surface your website in AI search answers. Some fetch pages only when a user asks a chatbot to visit a URL. Some obey robots.txt. Some need firewall-level enforcement if you are serious about protection.

For most Australian businesses, the best policy is selective. Allow crawlers that can send visibility, citations, and referral traffic. Block or limit crawlers that mainly collect public content for model training when there is no clear business benefit. Monitor server logs so the policy is based on evidence instead of fear.

This guide covers the main options, the practical risks, and the decision points businesses should work through before blocking or allowing AI crawlers.

Do Not Treat Every AI Bot the Same

The right crawler policy depends on whether the bot is helping customers discover you, training future models, fetching pages for a user, or wasting server resources.

Search Visibility

Allow crawlers that can surface and cite your pages in AI search experiences, such as OAI-SearchBot, PerplexityBot, Googlebot, Bingbot, and other relevant search bots.

Model Training

Consider blocking training-focused crawlers such as GPTBot, ClaudeBot, CCBot, and Google-Extended when your public content has licensing, IP, or competitive sensitivity.

User Fetching

Be careful with user-triggered agents such as ChatGPT-User or Claude-User. Blocking them can stop users from asking an assistant to retrieve your public page.

Private Content

Do not rely on robots.txt for confidential material. For anything that must stay private, use authentication, paywalls, server rules, and legal terms, and avoid exposing the content at a public URL.

Crawl Cost

If AI crawlers are increasing bandwidth, cache misses, origin load, or analytics noise, add rate limits, WAF rules, cache strategy, or bot management.
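To make the rate-limit idea concrete, here is a minimal sketch of a sliding-window limiter keyed by crawler name. In practice this logic usually lives in a CDN or WAF rule rather than application code; the class name, thresholds, and timestamps below are illustrative assumptions, not settings from any specific product.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per `window_seconds` for each key."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # key -> timestamps of accepted requests

    def allow(self, key: str, now: float) -> bool:
        hits = self._hits[key]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: block, challenge, or delay
        hits.append(now)
        return True


# Hypothetical policy: 2 requests per 10 seconds per crawler.
limiter = SlidingWindowLimiter(max_requests=2, window_seconds=10)
decisions = [limiter.allow("GPTBot", t) for t in (0, 1, 2, 10)]
```

Passing the timestamp in explicitly keeps the sketch deterministic; a real deployment would use the request time and would key on a verified bot identity rather than the user-agent string alone.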

Measurement

Track bot requests, AI search referrals, cited pages, crawl errors, server cost, conversion quality, and whether blocking changes visibility in AI answer engines.

Options Snapshot

Use these options to decide whether you need a simple robots.txt policy, selective crawler rules, CDN controls, enterprise bot management, or AI visibility monitoring.

| Option | Best for | What it controls | Limitations |
|---|---|---|---|
| Manual robots.txt policy | Most small and medium businesses starting with a controlled AI crawler policy. | Allows or disallows compliant crawlers by user-agent, including GPTBot, OAI-SearchBot, Google-Extended, ClaudeBot, Claude-SearchBot, PerplexityBot, CCBot, Googlebot, and Bingbot. | robots.txt is a request to compliant crawlers, not a security boundary. It does not stop bad actors, copied datasets, screenshots, browser-based agents, or already-crawled content. |
| Selective allow/block strategy | Businesses that want AI search visibility but do not want all public content used for training. | Allows AI search and citation crawlers while blocking training-oriented crawlers or low-value scrapers. | Requires ongoing updates because bot names, product uses, and crawler behaviour change over time. |
| Cloudflare AI Crawl Control | Businesses that want dashboard visibility, crawler-by-crawler control, robots.txt compliance monitoring, and edge enforcement. | Monitor AI services, set allow or block rules for individual crawlers, track robots.txt compliance, and explore Pay Per Crawl where available. | Some bot, WAF, analytics, and enterprise features may depend on plan and configuration. Pay Per Crawl is in private beta. |
| Cloudflare WAF, Transform Rules, logs, and cache controls | Sites with bot load, origin cost, suspicious user agents, or repeated requests that ignore robots.txt. | Blocks or challenges traffic at the edge, rate limits heavy crawlers, preserves cache performance, and validates whether requests are coming from known bot infrastructure. | Rules need careful testing so you do not block Googlebot, Bingbot, payment providers, uptime monitors, or legitimate users. |
| DataDome Bot Protect | Large publishers, marketplaces, ecommerce platforms, and sites where bot abuse, scraping, fraud, or AI agent traffic has a real financial impact. | AI-powered bot detection, fraud protection, AI crawler and agent controls, and monetization features on higher tiers. | Often too heavy for most small businesses unless crawler abuse is already creating measurable revenue, infrastructure, or legal risk. |
| Fastly AI Bot Management | Businesses already on Fastly or needing edge-level AI bot detection, blocking, rate limiting, deception, and policy control. | Detects confirmed and suspected AI bots, applies allow/block/rate-limit policies, and helps protect performance and content value. | Requires sales engagement, integration planning, and security tuning. |
| Akamai Bot Manager and Content Protector | Enterprise media, ecommerce, travel, finance, and high-traffic platforms that need advanced bot scoring and AI/LLM crawler policy enforcement. | Bot scoring, edge controls, allow/monitor/challenge/throttle/block actions, scraper protection, and monetization or licensing workflows. | Enterprise-grade capability with enterprise-grade procurement and implementation effort. |
| Semrush AI Visibility Toolkit | Marketing teams that need to measure whether crawler settings are hurting AI visibility. | AI visibility, prompt tracking, competitor gaps, brand performance, and site audit checks for technical blockers that may prevent AI bots from accessing content. | Measurement tool, not an enforcement layer. It does not replace robots.txt, WAF rules, or server-side access controls. |
| Ahrefs Brand Radar and Custom Prompts | SEO teams tracking whether AI crawler access changes mentions, citations, source pages, and competitor visibility. | AI Overview visibility, AI assistant mentions, citations, search demand, competitor comparisons, and custom prompt tracking. | Useful for measurement and discovery, but it does not decide or enforce crawler access. |

Allow Discovery, Limit Training, Protect Sensitive Assets

A balanced policy lets AI search engines discover public service pages while limiting training use, repetitive scraping, and access to content that should not be freely copied.

AI Crawler Control Checklist

Use this checklist before changing robots.txt or firewall rules.

Classify Bots

Group crawlers into search, training, user-triggered fetchers, research archives, commercial scrapers, and unknown automated traffic.
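As a rough illustration of this grouping, the sketch below maps user-agent tokens named in this guide to categories. The category names and the `classify_user_agent` helper are assumptions made for the example, and the token lists should be re-checked against each operator's current documentation. Google-Extended is left out because it is a robots.txt control token rather than a separate user-agent string in HTTP requests.

```python
# Hypothetical category map built from the crawler names used in this guide.
BOT_CATEGORIES = {
    "search": ["Googlebot", "Bingbot", "OAI-SearchBot", "PerplexityBot", "Claude-SearchBot"],
    "training": ["GPTBot", "ClaudeBot", "CCBot"],
    "user_fetch": ["ChatGPT-User", "Claude-User"],
}


def classify_user_agent(user_agent: str) -> str:
    """Return the first category whose token appears in the user-agent string."""
    ua = user_agent.lower()
    for category, tokens in BOT_CATEGORIES.items():
        if any(token.lower() in ua for token in tokens):
            return category
    return "unknown"  # commercial scrapers, archives, and unidentified traffic


# Illustrative user-agent strings, not copied from vendor documentation.
examples = {
    "Mozilla/5.0 (compatible; GPTBot/1.0)": classify_user_agent("Mozilla/5.0 (compatible; GPTBot/1.0)"),
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0)": classify_user_agent("Mozilla/5.0 (compatible; ChatGPT-User/1.0)"),
    "curl/8.0": classify_user_agent("curl/8.0"),
}
```

A user-agent string is easy to spoof, so a classification like this is a starting point for reporting, not proof of identity.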

Keep Search Open

Do not accidentally block Googlebot, Bingbot, OAI-SearchBot, PerplexityBot, Claude-SearchBot, or other crawlers you depend on for discoverability.

Block Training

Block training-oriented crawlers where content licensing, paid content, competitive IP, privacy, or brand risk outweighs possible AI exposure.

Protect Private

Use authentication, paywalls, and server-side access controls for sensitive content, and avoid exposing it at a public URL. robots.txt alone is not protection.

Monitor Logs

Track request volume, crawl paths, response codes, cache misses, source IPs, user agents, referrals, and conversions after policy changes.
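One way to start on log monitoring is a small script that counts requests per crawler and response code. The sketch below assumes access logs in the common "combined" format; the regular expression, the `WATCHED_TOKENS` list, and the sample lines are made up for illustration and will need adjusting to your server's actual log layout.

```python
import re
from collections import Counter

# Combined log format:
# ip - - [time] "METHOD path HTTP/x" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"$'
)

# Tokens to watch, taken from the crawler names in this guide.
WATCHED_TOKENS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "CCBot"]


def summarise_bot_requests(lines):
    """Count requests per (crawler token, status code); skip unparseable lines."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line.strip())
        if not match:
            continue
        ua = match.group("ua").lower()
        for token in WATCHED_TOKENS:
            if token.lower() in ua:
                counts[(token, match.group("status"))] += 1
                break
    return counts


# Made-up sample lines using reserved documentation IP addresses.
SAMPLE_LOG = [
    '192.0.2.10 - - [01/Jan/2025:10:00:00 +0000] "GET /services HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '192.0.2.10 - - [01/Jan/2025:10:00:02 +0000] "GET /pricing HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2025:10:01:00 +0000] "GET /services HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.5 - - [01/Jan/2025:10:02:00 +0000] "GET /reports HTTP/1.1" 403 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2025:10:03:00 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

summary = summarise_bot_requests(SAMPLE_LOG)
for (token, status), total in sorted(summary.items()):
    print(f"{token:15} {status} {total}")
```

Running the same summary before and after a policy change gives a simple baseline for whether a block is actually reducing requests.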

Revisit Monthly

Review bot documentation, AI referrals, citations, crawl load, and business outcomes because AI crawler ecosystems change quickly.

Recommended robots.txt Starting Point

This is a starting framework, not a universal rule. It keeps traditional search and AI search discovery open while blocking common training or dataset crawlers. Adjust it for your business model, content risk, and current crawler documentation.

    User-agent: GPTBot
    Disallow: /

    User-agent: ClaudeBot
    Disallow: /

    User-agent: CCBot
    Disallow: /

    User-agent: Google-Extended
    Disallow: /

    User-agent: OAI-SearchBot
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Claude-SearchBot
    Allow: /

    User-agent: Googlebot
    Allow: /

    User-agent: Bingbot
    Allow: /

    User-agent: *
    Disallow:

OpenAI's crawler documentation separates OAI-SearchBot for ChatGPT search visibility from GPTBot for foundation-model training. Anthropic similarly separates ClaudeBot, Claude-User, and Claude-SearchBot. Perplexity says PerplexityBot is for surfacing and linking websites in Perplexity search results, not foundation model training. Google-Extended is a standalone robots.txt token for controlling whether Google-crawled content may be used for future Gemini model training and grounding in Gemini products; it is separate from Googlebot.
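Before publishing a policy like this, it can help to test it locally. The sketch below uses Python's standard `urllib.robotparser` to check which user agents the starting-point rules would allow. The `check_policy` helper, the `example.com` URL, and `SomeOtherBot` are placeholders for illustration, and real crawlers may interpret edge cases differently from Python's parser.

```python
from urllib.robotparser import RobotFileParser

# The starting-point policy from this guide, one directive per line.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: Googlebot
Allow: /

User-agent: Bingbot
Allow: /

User-agent: *
Disallow:
"""


def check_policy(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return whether each agent may fetch `url` under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


AGENTS = ["GPTBot", "ClaudeBot", "Google-Extended", "OAI-SearchBot",
          "Claude-SearchBot", "Googlebot", "SomeOtherBot"]
results = check_policy(ROBOTS_TXT, AGENTS, "https://example.com/services")
for agent, allowed in results.items():
    print(f"{agent:18} {'allowed' if allowed else 'blocked'}")
```

Note that the final `User-agent: *` group with an empty `Disallow:` leaves every unlisted crawler allowed, which is why `SomeOtherBot` passes the check.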

That separation matters. If you block every AI-related user agent, you may reduce the chance of your public service pages being cited in AI search answers. If you allow every crawler, you may be giving away content for training, competitor research, or high-volume scraping without a clear return.

When You Should Allow AI Crawlers

Allow AI-related crawlers when discoverability matters more than exclusion. This is usually true for service businesses, ecommerce category pages, local business pages, SaaS product pages, help articles, public documentation, comparison pages, and educational content written to attract qualified customers.

For these pages, blocking AI search crawlers can be self-defeating. If ChatGPT search, Perplexity, Copilot, Gemini, or other AI answer engines cannot crawl or retrieve your content, they may cite competitors, directories, marketplaces, review sites, or outdated third-party summaries instead.

When You Should Block or Limit AI Crawlers

Blocking makes sense when the content has more extractive value than discovery value. Examples include paywalled analysis, member-only resources, high-value research, proprietary databases, legal templates, product feeds, pricing intelligence, unpublished documentation, content licensed from third parties, and pages that create heavy origin load without useful traffic.

For confidential or regulated content, do not depend on robots.txt. Use access controls. robots.txt can tell a compliant crawler not to fetch a URL, but it does not remove the URL from the public internet, stop human copying, enforce contracts, or prevent non-compliant automation.

The noindex Trap

If your goal is to keep a page out of Google Search, Google says noindex must be visible to Googlebot. If the page is blocked by robots.txt, Googlebot may never see the noindex directive. Use robots.txt for crawl control. Use noindex for indexing control. Use authentication for privacy.
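The conflict described above can be checked mechanically. This sketch flags pages that carry a noindex directive but are also blocked from the crawler by robots.txt. It assumes you already know which URLs carry noindex (for example from a site crawl export); the `find_noindex_conflicts` helper, the rules, and the URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def find_noindex_conflicts(robots_txt: str, pages: dict[str, bool],
                           agent: str = "Googlebot") -> list[str]:
    """Return URLs marked noindex that `agent` is not allowed to crawl.

    `pages` maps each URL to True if the page carries a noindex directive.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url, has_noindex in pages.items()
            if has_noindex and not parser.can_fetch(agent, url)]


# Hypothetical rules and page inventory.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

SAMPLE_PAGES = {
    "https://example.com/private/old-offer": True,   # noindex, but blocked
    "https://example.com/thanks": True,              # noindex and crawlable
    "https://example.com/private/feed": False,       # blocked, no noindex
}

conflicts = find_noindex_conflicts(SAMPLE_ROBOTS, SAMPLE_PAGES)
print(conflicts)
```

Each flagged URL needs a decision: either lift the robots.txt block so the noindex can be seen, or move the content behind authentication.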

What to Do About ChatGPT-User and Claude-User

User-triggered agents are different from automatic crawlers. OpenAI says ChatGPT-User may visit a page when a ChatGPT or Custom GPT user asks for it, and robots.txt rules may not apply because the action is initiated by a user. Anthropic says Claude-User supports Claude users accessing websites through user queries.

Blocking these agents can make sense for paywalled, legal, account, or sensitive pages. For public marketing pages, support documentation, and product pages, blocking them may frustrate a real prospective customer who is using an assistant to research your business.

Measure Before and After You Block

The strongest policy is evidence-led: check crawler load, referral quality, AI citations, server cost, and leads before making permanent changes.

Recommended Setup by Risk Level

Baseline Policy

Create a documented robots.txt policy, allow traditional search crawlers, selectively allow AI search crawlers, block obvious training crawlers where needed, and review server logs manually. Use Google Search Console and Bing Webmaster Tools for baseline crawl and search visibility checks.

Edge Controls

Use CDN or edge controls for visibility and crawler-level rules. This is usually the most practical step when you need more than robots.txt but do not need enterprise bot management.

Visibility Monitoring

Add AI visibility monitoring when AI search visibility, citations, prompt tracking, or competitor comparison is a monthly KPI. These tools help you see whether blocking decisions are helping or hurting discovery.

Enterprise Protection

Consider enterprise bot management when scraping, bot abuse, fraud, licensing, or infrastructure load is material enough to justify security procurement.

Decision Matrix

| Business situation | Recommended policy |
|---|---|
| Local service business with public service pages | Allow search and AI search crawlers. Block training crawlers only if you have a specific IP or licensing concern. |
| Publisher with premium analysis or paid content | Keep paywalled content behind authentication, block training crawlers, monitor user-triggered fetchers, and consider edge enforcement. |
| Ecommerce store with product feeds and dynamic pricing | Allow Googlebot and Bingbot for shopping/search where needed, rate-limit or block high-volume scrapers, and protect feeds with access controls. |
| SaaS company with public documentation | Allow AI search and user-triggered retrieval for public docs, block training crawlers where licensing matters, and monitor support-ticket deflection. |
| Enterprise with high bot traffic or legal exposure | Use robots.txt as policy signalling, but enforce with WAF, bot management, logging, contract terms, and security review. |

Common Mistakes

- Blocking all AI crawlers and then wondering why competitors appear in AI search answers.

- Blocking Googlebot or Bingbot while trying to block AI features.

- Assuming robots.txt protects private or paid content.

- Using noindex on a page that is blocked from the crawler that needs to see the noindex tag.

- Forgetting that old copies may already exist in public datasets such as Common Crawl.

- Not measuring AI referrals, cited pages, server load, or conversion quality before and after changes.

- Failing to verify bot identity before blocking by user-agent alone.

- Letting a one-time robots.txt update become stale as crawler documentation changes.
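On the point about verifying bot identity: where a crawler operator publishes its IP ranges, one approach is to check the source IP of a request against those ranges instead of trusting the user-agent string. The sketch below uses Python's standard `ipaddress` module. The ranges shown are reserved documentation blocks used as placeholders, not real crawler ranges, and `is_verified` is a hypothetical helper; load the actual ranges from each operator's documentation.

```python
import ipaddress

# Placeholder ranges from reserved documentation blocks (NOT real crawler IPs).
PUBLISHED_RANGES = {
    "GPTBot": ["192.0.2.0/24"],
    "PerplexityBot": ["198.51.100.0/24"],
}


def is_verified(bot_token: str, source_ip: str) -> bool:
    """Return True if `source_ip` falls inside a range listed for `bot_token`."""
    ip = ipaddress.ip_address(source_ip)
    networks = [ipaddress.ip_network(cidr)
                for cidr in PUBLISHED_RANGES.get(bot_token, [])]
    return any(ip in network for network in networks)


# A request claiming to be GPTBot from inside and outside the listed range.
genuine = is_verified("GPTBot", "192.0.2.55")
spoofed = is_verified("GPTBot", "203.0.113.9")
print(genuine, spoofed)
```

A request that claims a known crawler name but fails this check is a candidate for blocking or challenging at the firewall, since robots.txt will not stop it.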

Final Recommendation

Most businesses should not blanket-block AI crawlers. Start by allowing search and citation crawlers, blocking training crawlers where content risk matters, protecting sensitive content with real access controls, and monitoring the effect on visibility and server cost.

If your website depends on organic discovery, the goal is not to disappear from AI systems. The goal is to be visible on your terms: open where you want leads, closed where you need protection, and measured well enough to change policy when the evidence changes.

Sources Checked

- OpenAI crawler documentation

- Google robots.txt documentation

- Google crawler and Google-Extended documentation

- Anthropic crawler documentation

- Perplexity crawler documentation

- Common Crawl CCBot FAQ

- Microsoft Bing search and generative AI documentation

- Cloudflare AI Crawl Control documentation

- Cloudflare plan information

- DataDome product information

- Fastly AI Bot Management

- Akamai Bot Manager

- Semrush AI Visibility Toolkit documentation

- Ahrefs Brand Radar documentation